I encountered recursion a few months ago for the first time in a problem I don't recall anymore. What I do remember is that I found this wonderful video that really helped drive home two essential recursion concepts for me: the concept of the stack, and the fact that recursive programs wait to complete their individual runs until they have the information they need from their base case. Here it is:

At the time I watched this video, my mind was completely blown to think about solving

a factorial problem with recursion. I had felt so proud of a one-line factorial solution I came

up with after learning about ranges

— (1..num).to_a.inject(:*) — and it made me feel pretty silly to see something like

this instead. I wrote my own recursive factorial for practice:

The concept came up again for me when I was working on my quicksort post, when

a stray comment on a YouTube quicksort algorithm tutorial made me realize that quicksort is better written

recursively than iteratively. It was difficult to grasp until I gave it a proper base case and added

puts statements to see how it was creating and dealing with the recursive stack (not sure

what to properly call it). The output made me notice that it looked a bit like a tree: branches and subbranches.

Understanding that kind of data structure is next on my list, but back to recursion.

In a video on algorithms and recursion that Dev Bootcamp made avaiable to my cohort today, I noticed that instead of writing a sorting or factorial algorithm to explain recursion, the instructor talked about palindromes. This was another confusion-inducing moment for me: how did the program know to stop at a case that tested false? How did it trickle down the results to the rest of the stack? Then I realized that it worked by making smaller and smaller slices of the original word, with a base case of a single letter being its own palindrome. Here's my attempt at it:

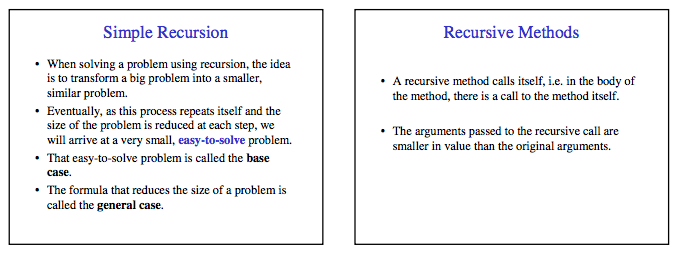

To summarize the essential qualities of recursion, I think this explanation is particularly simple and well written: